Andrew Liu*, Richard Tucker*, Varun Jampani, Ameesh Makadia, Noah Snavely, Angjoo Kanazawa

ICCV 2021 Oral

[Arxiv]


[Colab Demo] | [Github]

Input image and generated video:

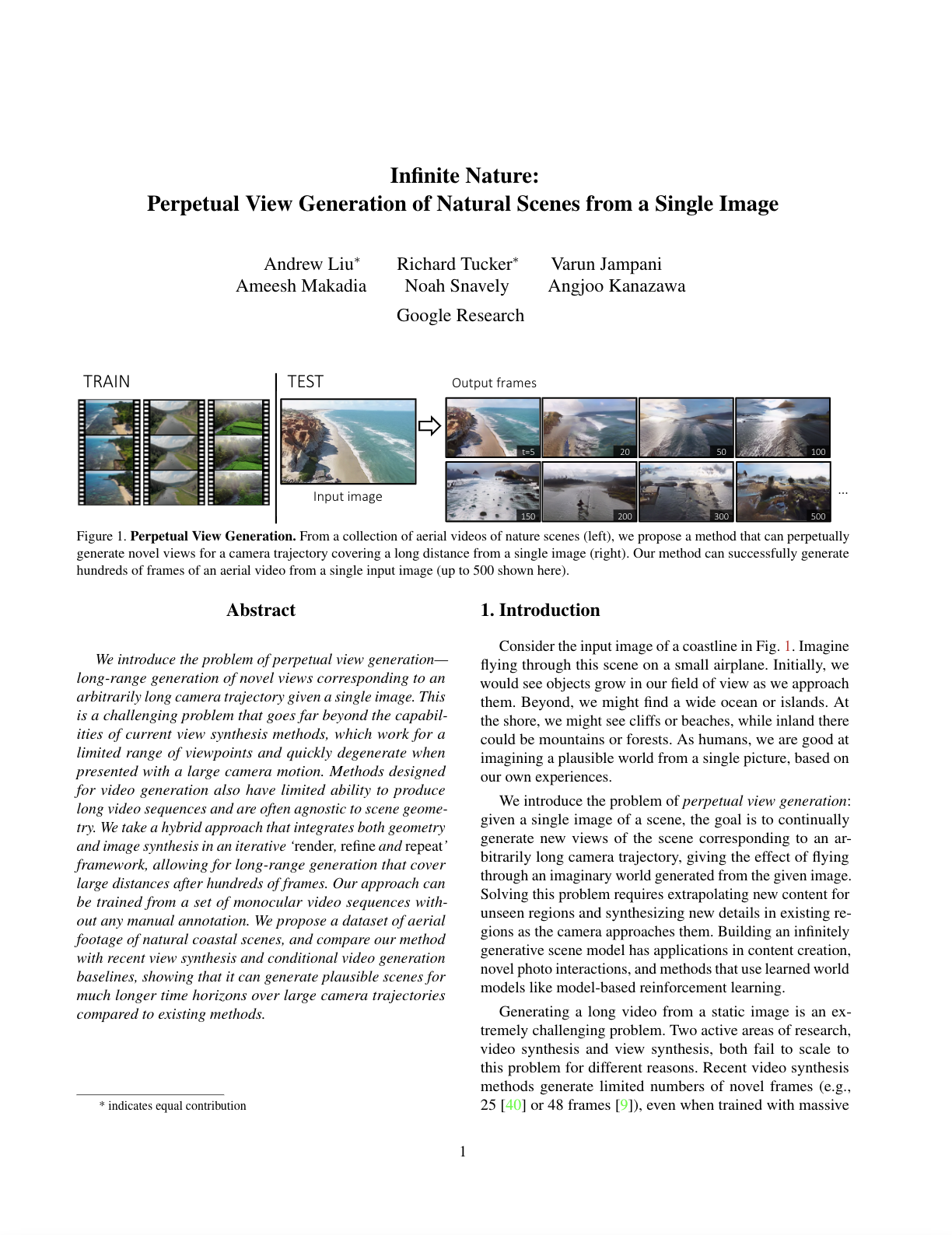

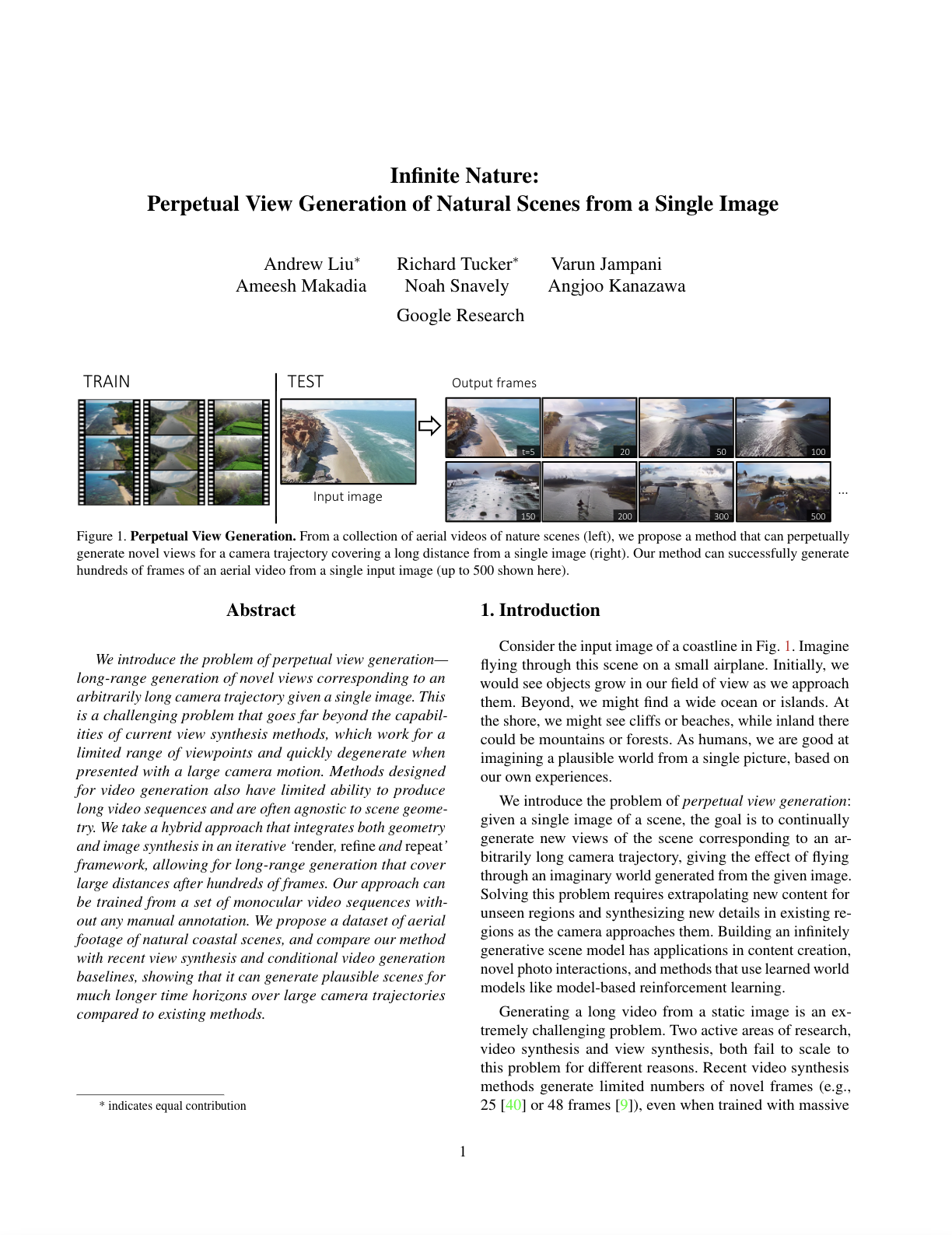

We introduce the problem of perpetual view generation: the long-range generation of novel views corresponding to an arbitrarily long camera trajectory, given a single image. This is a challenging problem that goes far beyond the capabilities of current view synthesis methods, which work only for a limited range of viewpoints and quickly degenerate when presented with large camera motion. Methods designed for video generation also have limited ability to produce long video sequences and are often agnostic to scene geometry. We take a hybrid approach that integrates both geometry and image synthesis in an iterative render, refine, and repeat framework, allowing for long-range generation that covers large distances over hundreds of frames. Our approach can be trained from a set of monocular video sequences without any manual annotation. We propose a dataset of aerial footage of natural coastal scenes, and compare our method with recent view synthesis and conditional video generation baselines, showing that it can generate plausible scenes over much longer time horizons and larger camera trajectories than existing methods.
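To make the render, refine, and repeat structure concrete, here is a minimal sketch of its control flow only. It is not the authors' implementation: `perpetual_generation`, `render`, and `refine` are hypothetical names, where `render` stands in for the geometry step (moving the current view to the next camera) and `refine` for the image-synthesis step (filling in missing regions and adding detail). The toy stand-ins use a 1-D list of pixels and an integer shift purely so the loop can run.

```python
from typing import Any, Callable, List


def perpetual_generation(
    initial_view: Any,
    poses: List[Any],
    render: Callable[[Any, Any], Any],
    refine: Callable[[Any], Any],
) -> List[Any]:
    """Produce one frame per camera pose with a render -> refine -> repeat loop."""
    frames = []
    view = initial_view
    for pose in poses:
        warped = render(view, pose)  # geometry step: move the current view to the next camera
        view = refine(warped)        # synthesis step: fill holes and restore detail
        frames.append(view)          # the refined frame seeds the next iteration
    return frames


# Hypothetical toy stand-ins: a "view" is a 1-D list of pixels, a "pose" is a shift.
def toy_render(view: List[Any], shift: int) -> List[Any]:
    # Shifting right exposes `shift` unknown pixels (None) on the left edge.
    return [None] * shift + view[: len(view) - shift]


def toy_refine(view: List[Any]) -> List[Any]:
    # Naive "inpainting": copy the first known pixel into every hole.
    fill = next(v for v in view if v is not None)
    return [fill if v is None else v for v in view]


if __name__ == "__main__":
    print(perpetual_generation([1, 2, 3, 4], [1, 1], toy_render, toy_refine))
    # → [[1, 1, 2, 3], [1, 1, 1, 2]]
```

The key design point the loop illustrates is that each refined output, not the original input, is fed back into the next render step, which is what allows generation to continue for an arbitrarily long trajectory.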

To train our model, we identified thousands of aerial drone videos of coastlines and other nature scenes on YouTube. We ran structure-from-motion on these videos to obtain camera poses, and we release the resulting dataset, ACID (Aerial Coastline Imagery Dataset), in the same format as RealEstate10K. Shown below are some randomly selected example videos from the dataset.

We also release ACID-Large, a larger version of ACID with more training sequences, collected using additional search terms and less stringent filtering.

[Download ACID-Large]
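Because the ACID camera poses are released in the RealEstate10K format, a per-sequence camera file can be read with a short parser. The sketch below assumes the layout commonly described for RealEstate10K: the first line is the source YouTube URL, and each following line has 19 whitespace-separated columns (a timestamp in microseconds, four intrinsics fx, fy, cx, cy, two columns this sketch skips, and a 3x4 camera pose in row-major order). `Frame` and `parse_camera_file` are hypothetical names, and the column layout should be checked against the official RealEstate10K documentation before relying on it.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Frame:
    # Hypothetical container for one line of a RealEstate10K-style camera file.
    timestamp_us: int                              # microseconds from video start
    intrinsics: Tuple[float, float, float, float]  # fx, fy, cx, cy (assumed normalized by image size)
    pose: List[List[float]]                        # 3x4 camera pose, row-major


def parse_camera_file(text: str) -> Tuple[str, List[Frame]]:
    """Parse one sequence file: a URL line followed by 19-column frame lines."""
    lines = [ln.strip() for ln in text.splitlines() if ln.strip()]
    if not lines:
        raise ValueError("empty camera file")
    url, frames = lines[0], []
    for ln in lines[1:]:
        cols = ln.split()
        if len(cols) != 19:
            raise ValueError(f"expected 19 columns, got {len(cols)}")
        fx, fy, cx, cy = (float(c) for c in cols[1:5])
        # Columns 6-7 (indices 5-6) are skipped; this sketch assumes they are unused.
        vals = [float(c) for c in cols[7:19]]
        pose = [vals[0:4], vals[4:8], vals[8:12]]
        frames.append(Frame(int(cols[0]), (fx, fy, cx, cy), pose))
    return url, frames


if __name__ == "__main__":
    # Made-up example: placeholder URL and a single frame with an identity pose.
    sample = (
        "https://www.youtube.com/watch?v=PLACEHOLDER\n"
        "33366667 0.5 0.9 0.5 0.5 0 0 1 0 0 0 0 1 0 0 0 0 1 0\n"
    )
    url, frames = parse_camera_file(sample)
    print(url, len(frames), frames[0].pose)
```

Rejecting lines that do not have exactly 19 columns is a deliberate choice here: a silent misparse of the pose would be much harder to notice downstream than an early error.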